Looks great. No buttons on the unit? Can I get one to test?

Except for records, I don't see any advantage in 18 Hz. Just makes the files 18x larger.

There is 1 button on the unit, it is located on the side of the unit.

It turns the unit on and off, but since last week it can also do a "soft reset": this only clears the values on the display, and the unit keeps logging to the same file it created at satellite lock.

My idea is to move the button to the top, as it is easier to waterproof such a button there.

There is no option to buy units atm; before I start production in any form I will request technical approval from GP3S.

If I can't replace the GT-31 with this device, it will be easier to use a Suunto.

But I have great faith in the unit.

Okay Gurus!

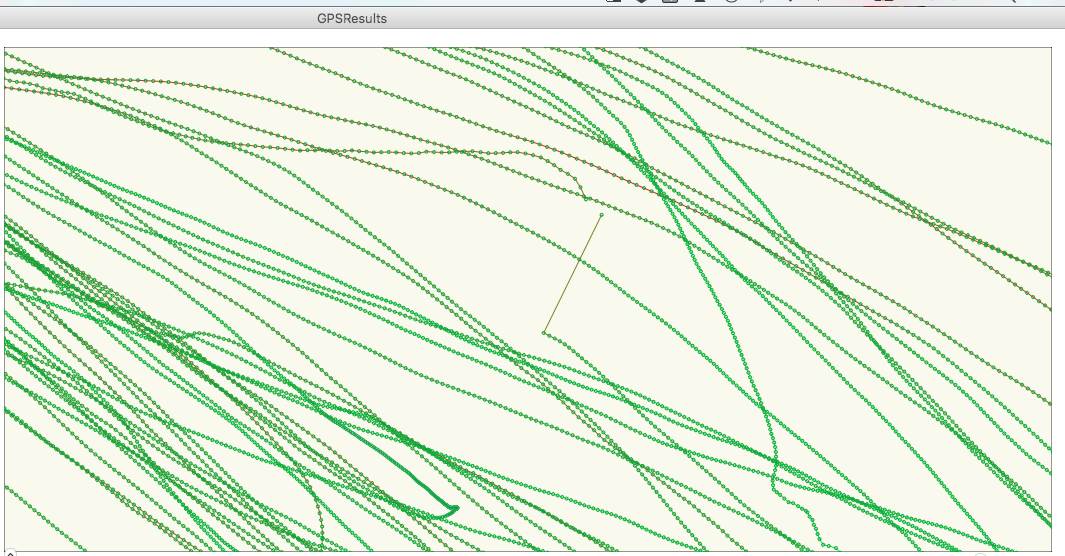

I'm a little perplexed with the below image taken from last Sunday's session. I have a sneaky suspicion that my GW-52 was 'shadowed' from the satellites and therefore the track has skipped, but I wouldn't mind having that confirmed regardless. This is not the only 'skip', but it is certainly the most pronounced.

Both the Google Earth and GPS Results show the same track error. If this is the case, I will consider placing the Aquapac in a slightly better location.

Yeah I know that I should be discussing this with my team Captain however I think he's still recovering from his first venture into the deep deep waters of St Georges Basin last week! Best not to disturb the sleeping bear...

Thanks in advance for any constructive feedback.

Quote from Manfred: "Running the GW 52 at 1Hz to match the GT31 also makes no sense because of the missing antialiasing filters - the units have to run with the highest frequency possible (GW 52, Thingsee: 10Hz) to avoid this, because simple decimation of the data without filtering renders the data useless..."

There are quite a few statements here that I would like to address - to help keep context/flow of what I am writing, I will address them one at a time.

"renders the data useless" is, at best, an extreme overstatement. I would put it into the same category as "speed below 5 knots should not count towards distance". It's a personal opinion, which is based on some rational arguments, but it's not the one and only truth. Remember you are quoting a German engineer!

Ignoring speed below 5 knots has always resulted in a discussion. In particular if you happen to go out for a sail in 10 knots with a 7m sail, then drift down the course -> you *are* still sailing. That is why GPSResults allows you to change the filter parameters.

I'm pretty fed up with all the "our tests show this and that and our rules are based on the results", without anyone ever bothering to make these data available. Setting up a WordPress or Google site to put the results of your tests takes just a few minutes.

All of this work was done by volunteers, Mathematicians, Doctor of Physics, Electronic Engineers, Programmers. What you are asking for is for us to do *more* work.

The information is available - maybe just be polite and ask for it ?!

Manfred has been a driving force behind going to higher data rates (5 Hz and 10 Hz). From the (mostly third-hand) talk I heard about this, I understand that the main reason to use higher data rates for measuring straight line speed (everything except alpha) is the reduction of random error through higher sampling rates. Assuming that measurement error is largely random, measuring at 5 Hz could give about 2-fold higher accuracy than measuring at 1 Hz.

Manfred definitely hasn't been the driving force. Since the days of the E-Trex and Foretrex 101, the whole community of GPS-Speedsurfing.com has always wanted high-Hz sample rates. To single out a specific person does a disservice to the history of how we got here... it's just that Tom and Manfred volunteered the most time.

[ Be aware that Manfred built the video-timing stuff used in many speed-sailing events... if anything he has a vested interest in making sure GPS technology *doesn't* replace his existing system. Please don't accuse/single-out a single person as having any motive other than to better the existing technology. ]

Also, if you consider this forum as third-hand, I would suggest that you are incorrect. Andrew Daff in particular was there at the start, so anything that he has written is indeed first-hand. In my case, I came in late to the party, around the time where the Foretrex 201 was the GPS model of choice.

If the GW-52 units would have proper filters when running at 1 Hz, then the accuracy at 1 Hz could be very close to the accuracy at 5 Hz. Simple averages would do most of the trick, Kalman filters (which are typically used in GPS units) would be even better. But I guess the comment about the "missing antialiasing filters" indicates that the GW-52 does not use such filters; instead, it may simply discard 4 of 5 data points, and log the 5th. This is the easiest way to implement 1 Hz recording on 5 Hz units, so there is a good chance it's done this way.

A traditional Kalman filter is an infinite-impulse-response filter - as such, it is very well suited to general purpose filtering of GPS data when used for everyday usage (walking, driving, etc). However it negatively affects short-window measurements - indeed a 1Hz Kalman filter in a consumer grade GPS could have significant sample-bias from measurements taken many seconds prior.

The work on the GT-31 focused around understanding how the 1Hz measurements are created. Using a number of apparatus, we were able to deduce that there was likely to be a Kalman filter [ as opposed to some other filter ] and it appears to be modified to cut-off history [ thus making it a finite-impulse-response filter ].
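To make the IIR vs FIR distinction above a bit more concrete, here is a minimal sketch. It is not the GT-31's filter (nobody outside the manufacturer knows that for certain) and it is not a real Kalman filter either: a plain exponential smoother stands in for "a filter with infinite history", and a plain moving average stands in for "a filter with its history cut off". The `alpha` and `length` values are arbitrary assumptions.

```python
def exponential_smooth(samples, alpha):
    """First-order IIR filter: every past sample keeps some decaying weight."""
    out = []
    state = samples[0]
    for x in samples:
        state = alpha * x + (1 - alpha) * state
        out.append(state)
    return out


def moving_average(samples, length):
    """FIR filter: only the last `length` samples contribute to each output."""
    out = []
    for i in range(len(samples)):
        window = samples[max(0, i - length + 1): i + 1]
        out.append(sum(window) / len(window))
    return out


# Hypothetical speed trace: 0 knots for five samples, then 10 knots for five samples.
step = [0.0] * 5 + [10.0] * 5
iir = exponential_smooth(step, alpha=0.3)
fir = moving_average(step, length=5)

print("IIR after 5 samples at the new speed:", round(iir[-1], 2))
print("FIR after 5 samples at the new speed:", fir[-1])
```

Five samples after the step, the moving average has forgotten the old speed completely, while the exponential smoother is still being dragged down by it. That lingering history is the "sample-bias from measurements taken many seconds prior" concern for short-window results.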

Every digital-system must by definition include some type of anti-aliasing filter - as that is one [ of the many ] elements of using digital technology to sample the real-world. The statement that you quoted doesn't explain what you are trying to say. Can you elaborate on this point?

One of the statements you make below and in subsequent posts needs to be mentioned here. The point of high-Hz sampling is specifically "because we don't know what is really happening". When we take a scientific measurement, we must always provide a methodology which includes any assumptions. When we performed the various tests, we did identify that we couldn't see what was happening at the microscopic level (aka sub-1-second). This *is* the driving point behind why we want high-Hz GPS's.

As a corollary... instead of having 1Hz samples, why don't we just use 0.5Hz samples, as that would suffice for the 2-second category? Going to the extreme, why don't we just take the first point and the last point of two samples that are 10 seconds apart? We don't use 10-seconds as it doesn't tell us a) what is our peak speed b) which 10-second window do we choose to start at. Going subsecond is just the same thing, but with a smaller time-window. Thus we use 1Hz because that is the best that the consumer-grade technology could provide in a cheap package.

I mentioned testing... As an engineer you will be aware that it is important that we can implement test cases that accurately reflect the result that we are trying to verify. I list here a basic description of some of the tests performed:

- Walk 10 m watching the GPS, then quickly put the GPS inside a tin-can, turn 90 deg and continue walking -> after a few seconds remove the GPS from the can. Ideally the track log should show zero/null datapoints as soon as the GPS is put into the can; it should then show the new datapoints "somewhere else". What you *shouldn't see* is the device playing catch-up or any other non-zero-datapoints while it was inside the can. Note here specifically is where Kalman filters aren't necessarily a good thing [ or at least they need to not have long history ]. This idea came from Ian Knight.

- One of the apparatus was a rotating-arm with a variable rotation rate - this was used to measure the aliasing. Manfred. [ I was on the email discussion in the early days and have seen photos of this rig. ]

- Another apparatus was a linear oscillator - again used to measure aliasing, but also over-shoot. Tom Chalko.

- Tom mounted quite a few GPS's to his car, then drove many hundreds of km's, then performed Fourier analysis on the error values. What this showed was the max-error value quoted by the manufacturers, is actually far worse than what we are statistically able to measure [ with say over a million points ].

... and many others.

If we assume that the 1 Hz recording in the GW-52 is indeed implemented in this most simplistic way, the effect of the sub-sampling is that the error would increase by roughly 2-fold (square root of 5). Why anyone would call data with a 2-fold higher error margin "useless" is beyond me. Similarly, it is hard to imagine how the GW-52 can be significantly more accurate than the GT-31 or the Canmore at 5 Hz, but noticeably worse at 1 Hz. The only expected effect of using 1 Hz readings instead of 5 Hz reading would be that some teams might swap places in the monthly 2 second ranking (and perhaps in the alphas). The effect of running GT-31s in "power save" mode sure is a lot larger, and I did not see any warnings against that for years.
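For anyone who wants to check the "square root of 5" figure rather than take it on faith, here is a small simulation sketch. It assumes exactly what the paragraph above assumes: the 5 Hz speed errors are purely random, independent and Gaussian (the noise level `sigma` and the constant true speed are made-up values). Real GPS errors are very likely correlated from sample to sample, so treat the result as the best case for averaging, not as a measurement of the GW-52.

```python
import random
import statistics


def compare_downsampling(true_speed=30.0, sigma=0.5, seconds=20000, rate=5, seed=1):
    """Return (error SD when keeping 1 of `rate` samples, error SD when averaging them)."""
    rng = random.Random(seed)
    decimated_err, averaged_err = [], []
    for _ in range(seconds):
        # One second of noisy readings at `rate` Hz.
        block = [true_speed + rng.gauss(0.0, sigma) for _ in range(rate)]
        decimated_err.append(block[-1] - true_speed)        # discard 4, log the 5th
        averaged_err.append(sum(block) / rate - true_speed)  # average all 5
    return statistics.pstdev(decimated_err), statistics.pstdev(averaged_err)


dec_sd, avg_sd = compare_downsampling()
ratio = dec_sd / avg_sd
print(f"decimated SD {dec_sd:.3f}, averaged SD {avg_sd:.3f}, ratio {ratio:.2f}")
```

Under these assumptions the ratio comes out close to 2.2, i.e. simple decimation roughly doubles the per-point error compared with averaging, which is the "roughly 2-fold" in the post.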

I'm not sure if this is a statement or a question - can you rephrase it?

Power-save has *always* been off the cards. This came from the original Foretrex 101 - it is documented everywhere. Ignoring that, if you think about it even just a little bit, disabling power-save makes perfect sense vs. requiring timely and accurate data-logging.

There are a few practical reasons to use 1 Hz recording, though. The 5 Hz data have a lot of high-frequency oscillations that don't really add to the analysis. In "action replay" mode in GPSAR Pro, you can't really follow the speeds at 5 Hz, they vary too quickly; nor does viewing tracks only around the current point work as well, since the region shown is 5x shorter. But more important is the limited data capacity on the GW-52 of only 129 thousand data points. That's 7.3 hours at 5 Hz, but 35 hours at 1 Hz. When I record at 1 Hz, I can recharge the unit between sessions from any USB charger or an external battery. When I record at 5 Hz, I must hook the unit up to a computer running Windows between sessions, or I lose data - most of my sessions are longer than 4 hours. Depending on where I am, that can be pretty darn inconvenient.
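The capacity arithmetic is easy to redo for other logging rates. A tiny sketch, taking the "129 thousand data points" from the post at face value (the exact capacity of the unit is an assumption here, and the function name is just for illustration):

```python
def logging_hours(capacity_points, rate_hz):
    """Hours of logging before the memory is full, at a fixed logging rate."""
    return capacity_points / rate_hz / 3600.0


hours_5hz = logging_hours(129_000, 5)
hours_1hz = logging_hours(129_000, 1)
print(f"5 Hz: {hours_5hz:.1f} h, 1 Hz: {hours_1hz:.1f} h")
```

With a round 129,000 points this gives a bit over 7 hours at 5 Hz and just under 36 hours at 1 Hz, in line with the figures quoted in the post.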

Your statement that 5Hz doesn't add to the analysis really surprised me. The purpose of scientific endeavour is to understand everything - if we stopped when we thought it was good enough, then we wouldn't have identified sub-atomic particles or built the Large Hadron Collider.

Don't confuse data-analysis with data-visualisation. If played with a suitable player, then the higher-Hz GPS just allows you to "zoom in" to your track in real-time! ( Not GPSar as AFAIK it assumes that the data-play-rate is linked to the frame-rate - they should be independently controllable. )

You are also conflating the memory-storage of a GW-52 specifically, and the ability to more accurately understand what is happening at the microscopic level by capturing more data. Memory cards are cheap, it is only a matter of time before that datapoint limitation is removed. Similarly battery capacity - just build a device with a bigger battery.

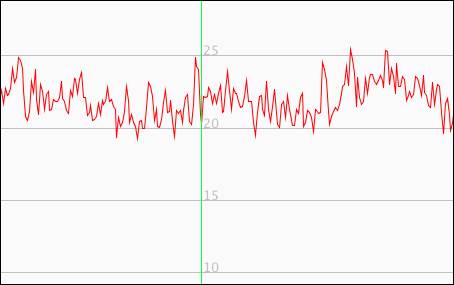

Spikes?????? are you referring to the 5hz ripple? The peaks can be almost a knot above the average. They're not what are normally referred to as "spikes".

The reason you don't see them at 1hz is they've been filtered out in the GT31 and averaged out in the GW52.

Locosys says in the case of the GW52 the 5hz and 1hz data is the same, it's just that 5 points have been averaged to produce one.

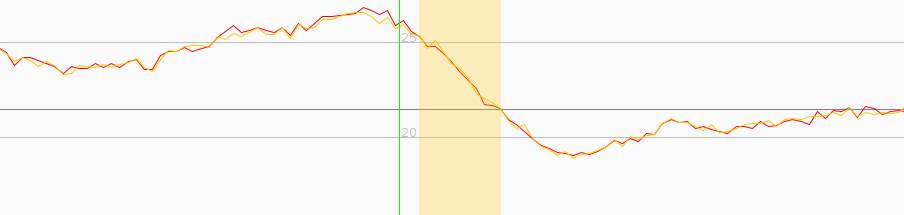

How much of the 5 Hz ripple is real, and how much noise, is an unanswered question, I think. Check out this section at 5 Hz and 1 Hz (down sampled):

... and thus why we want high-Hz GPS's.

If we don't know, we shouldn't make any assumptions at all.... We should use scientific method to learn about our environment at this sub-second level.

One knot corresponds to 10 cm in 0.2 seconds (5 Hz). My arm may well move 5 cm forward and back in that time. If you look for the max at 5 Hz, you may get lucky and find 2 spots near the max that are both above the average, and get 1/2 or a whole knot more speed. You really want to claim that's faster?

The purpose of using GPS's to measure our windsurfing is to avoid having to set up any type of infrastructure. Since sailors can indeed do 50kn, it is possible to travel > 25m per second. ie: you have chosen the low-speed limit, when you should have chosen the upper-limit **

** Since SailRocket did > 65kn, it would seem prudent to make the GPS tech support this use-case... ie: > 35m/s

Indeed your question of "is it noise" is a good one... but since we cannot see past 5Hz, that question is unanswered.... but not for long, there are now 50Hz GPS chips targeting the drone market.

Imagine the curve being flatter, because you stay closer to max speed for longer. Then, it is very likely that your top speed is peak-to-peak in the ripples. Measuring it this way goes against calling it "average" speed over 2 seconds. Using raw high frequency data only makes the problems for 2 second data worse. And we know that the 2 second category is the one that is most problematic, since spikes and other artifacts influence it the most.

It doesn't go against it at all [ assuming I understand what you are against ] - averaging your samples over a time-period is exactly what should be implemented. What you are describing is "measurement bias" - more samples in a given window, reduces the effect of measurement bias.

Spikes and so on are a result of a limitation in the measurement technology - as technology gets better, the sample-window can shrink accordingly. For example, a GPS from 1990 has at best say 15m resolution by sitting still for a long time. Now your phone can get you to within a few meters almost instantly.

Note that I don't know what you mean by raw high-frequency data. GPS's cannot take a doppler measurement "instantly" ... if for no other reason than that the PLL requires sufficient time to measure that carrier-wave. [ Even moving to RTK still requires part of the carrier-wave to be measured. ]

If the 1 Hz data on the GW-52 are indeed averaged, as Locosys says (and I have no reason to doubt their statement), then using the GW-52 at 1 Hz will give more meaningful 2 second results than at 5 Hz. For all other categories, the differences will be extremely small - I bet smaller than the differences from wearing 2 units on different arms.

It won't. If for no other reason than the ability to better choose when to start your 2 or 10 second sample-window.
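The window-start point can be illustrated with a sketch. This is not how GPSResults or any other analysis program computes the 2 second category; it is just a plain sliding-window average over a made-up, deliberately exaggerated speed trace (2 seconds at 30 knots that does not line up with whole seconds). The 1 Hz series is built by averaging each block of 5 samples, as Locosys is said to do on the GW-52.

```python
def best_window_average(speeds, rate_hz, window_s):
    """Highest average speed over any run of consecutive samples spanning `window_s`."""
    n = int(round(rate_hz * window_s))
    if n <= 0 or len(speeds) < n:
        raise ValueError("not enough samples for the requested window")
    running = sum(speeds[:n])
    best = running
    for i in range(n, len(speeds)):
        running += speeds[i] - speeds[i - n]  # slide the window by one sample
        best = max(best, running)
    return best / n


# Hypothetical 5 Hz trace: the 30 knot burst starts 0.6 s into the first second.
speeds_5hz = [20.0] * 3 + [30.0] * 10 + [20.0] * 7

# 1 Hz version: average each block of five 5 Hz samples.
speeds_1hz = [sum(speeds_5hz[i:i + 5]) / 5 for i in range(0, len(speeds_5hz), 5)]

best_5hz = best_window_average(speeds_5hz, rate_hz=5, window_s=2)
best_1hz = best_window_average(speeds_1hz, rate_hz=1, window_s=2)
print(best_5hz, best_1hz)
```

At 5 Hz the window can start exactly where the burst starts and returns 30 knots; at 1 Hz the window can only start on whole seconds and returns 28 knots. Real runs do not have edges this sharp, so the real-world difference would be much smaller, but the direction of the effect is the same.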

The differences are already small for all other categories... they were small when we used the Foretrex 201 to measure a 500m course, but that didn't stop us from moving to better technology.

So on the "noise" question. Two GW52 in the same pouch on my head gave these tracks.

Note the ripple is very similar, they are basically in sync, made me think the ripple represented real speed.

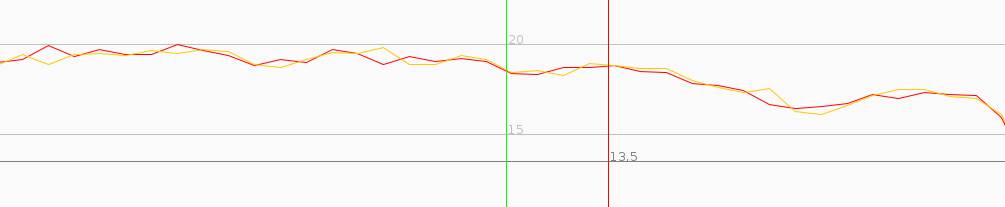

However when I had one on my head and one on my arm there is a slight difference.

Here the ripple is mainly out of phase. Makes me think this is indeed noise, and arm to head distance has some relationship to half wave length.

Any thoughts?

Okay Gurus!

I'm a little perplexed with the below image taken from last Sunday's session. I have a sneaky suspicion that my GW-52 was 'shadowed' from the satellites and therefore the track has skipped, but I wouldn't mind having that confirmed regardless. This is not the only 'skip', but it is certainly the most pronounced.

Both the Google Earth and GPS Results show the same track error. If this is the case, I will consider placing the Aquapac in a slightly better location.

Yeah I know that I should be discussing this with my team Captain however I think he's still recovering from his first venture into the deep deep waters of St Georges Basin last week! Best not to disturb the sleeping bear...

Thanks in advance for any constructive feedback.

You'll see this on trackpoint data, doppler tracks are normally better.

And yes, no matter what your team captain says, I'll always recommend wearing the GPS where it gets the best sky view. Top of head is best, top of shoulder next best

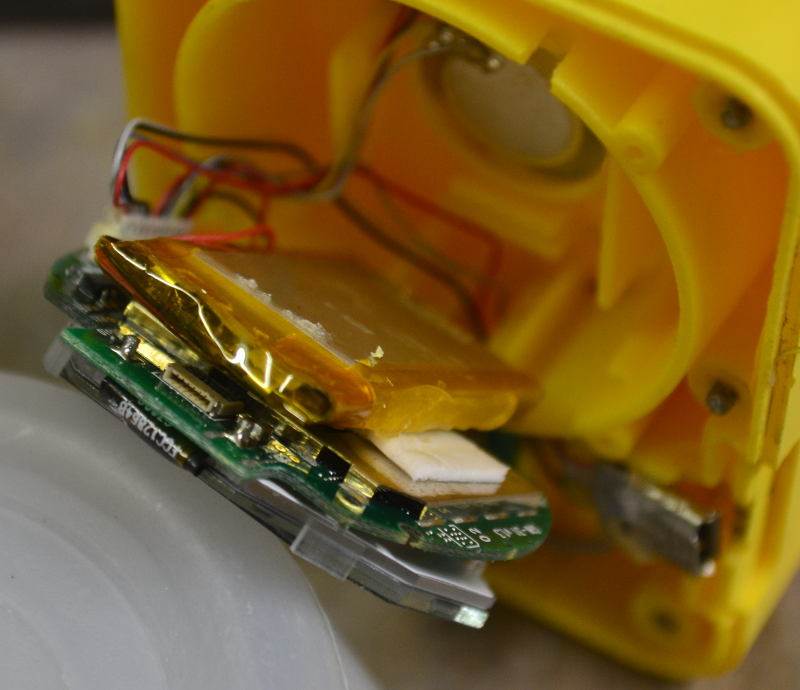

OK for all those who were wondering what's in the silly little plastic box.

AND I strongly urge you not to try this with a good unit!!!!!!!!!!!!!!!

This one has stopped communicating with the computer, CRC no longer helps, so if I can find a mini USB port I'll have a go at replacing it.

Sorry I neglected to get a pic of the cover with touch pad attached and ribbon cable hanging down.

The big problem is that it's all stuck together and both sides of the display stay with their respective halves. At the right hand side of the pic you can see a black flat section. Behind that is where the touch pad ribbon cable connects. Pulling the unit apart just drags this cable out of its socket. To get it back in you have to lever the main display up against the glue holding it in so you can see the socket. Under there, there seem to be 2 batteries, a small button type and a larger square one about the size of the antenna to the right of the pic. So it seems they have increased the antenna from when it was a watch.

Found one at Jaycar for $2.95, will have to wait until I get back from Albany next week.

Wish me luck, could be a quick fix, but I'll have to find the smallest tip for my soldering iron first!

PS, my screws have 1.5mm allen key heads.

>>>>

I've run GT31 and GW52 at 1 Hz for several sessions lately and pretty much the same speeds.

Also, no spikes at 1 Hz, which I do get at 5Hz

Spikes?????? are you referring to the 5hz ripple? The peaks can be almost a knot above the average. They're not what are normally referred to as "spikes".

The reason you don't see them at 1hz is they've been filtered out in the GT31 and averaged out in the GW52.

Locosys says in the case of the GW52 the 5hz and 1hz data is the same, it's just that 5 points have been averaged to produce one.

No, my spikes are not the 5Hz ripple.

They are 100kt + speeds.

I have also seen the loss of track which Tony Wills talks about later in the topic.

It's usually ok but I get this random wacko stuff. Never get this stuff with the GT31 which is on my arm.

The GW52 is on my head.

>>>

No, my spikes are not the 5Hz ripple.

They are 100kt + speeds.

I have also seen the loss of track which Tony Wills talks about later in the topic.

It's usually ok but I get this random wacko stuff. Never get this stuff with the GT31 which is on my arm.

The GW52 is on my head.

Any chance you could send me the file/s with it in. Daffy would be interested as well, I'll pass it on.

Just PM me I'll give you my email address.

Edit I've just checked some of my files and I do have some very high spikes, 85kts best so far.

But that's only in trackpoint data, doppler data is fine.

Beat it, just found trackpoints saying 372Kts, but doppler says 7kts.

DO NOT use trackpoint data!
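Why trackpoint speeds blow up so easily: they are distance between two logged positions divided by the time between them, so a small position glitch over a short interval turns into a huge speed. A minimal sketch, assuming a spherical Earth and the haversine formula (the function names and the example coordinates are made up for illustration, and this is not how any particular analysis program does it):

```python
import math

EARTH_RADIUS_M = 6371000.0      # mean Earth radius, spherical approximation
MS_TO_KNOTS = 3600.0 / 1852.0   # 1 knot = 1852 m per hour


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def trackpoint_speed_knots(lat1, lon1, lat2, lon2, dt_s):
    """Speed implied by two consecutive trackpoints logged `dt_s` seconds apart."""
    return haversine_m(lat1, lon1, lat2, lon2) / dt_s * MS_TO_KNOTS


# A position glitch of 0.0001 degrees of latitude (about 11 m) between two 5 Hz points.
glitch_knots = trackpoint_speed_knots(-35.0, 150.6, -34.9999, 150.6, dt_s=0.2)
print(f"{glitch_knots:.0f} knots")
```

An 11 m jump in position is nothing unusual when the sky view is poor, but over 0.2 seconds it reads as more than 100 knots. The doppler speed does not depend on the logged positions, which fits with it staying sensible when the trackpoints go wild.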

OK for all those who were wondering what's in the silly little plastic box.

AND I strongly urge you not to try this with a good unit!!!!!!!!!!!!!!!

This one has stopped communicating with the computer, CRC no longer helps, so if I can find a mini USB port I'll have a go at replacing it.

Sorry I neglected to get a pic of the cover with touch pad attached and ribbon cable hanging down.

The big problem is that it's all stuck together and both sides of the display stay with their respective halves. At the right hand side of the pic you can see a black flat section. Behind that is where the touch pad ribbon cable connects. Pulling the unit apart just drags this cable out of its socket. To get it back in you have to lever the main display up against the glue holding it in so you can see the socket. Under there, there seem to be 2 batteries, a small button type and a larger square one about the size of the antenna to the right of the pic. So it seems they have increased the antenna from when it was a watch.

Can you take a picture from the PCB backside?

Would be fun to see what they used.

yep, but you'll have to wait until next week sometime when I get the usb socket and pull it apart again.

So on the "noise" question. Two GW52 in the same pouch on my head gave these tracks.

Note the ripple is very similar, they are basically in sync, made me think the ripple represented real speed.

Graphs like this one are quite useful, but we have to be careful with the conclusions we draw.

1. A lot (but not all) of the "ripple" looks very similar for both units. If the ripple were caused by completely random measurement errors, then it should look different, so we know that random errors are not the main cause of the ripples.

2. There are some differences between the tracks, so there definitely is some random noise in the data. At some spots in the image, only one track has ripples (e.g. the red track on the right side). But there are also spots where the ripples look similar, but the tracks are separated, for example to the left of the green line. That's also "random" measurement error.

3. One could do the math on the tracks (or a large collection of tracks collected this way) and come up with estimates how much of the ripples are due to "random error", and how much is "real". Eyeballing it from just the image above, I'd say about 1/4 to 1/3 is random.

4. Even the ripples we call "real" in step 3 could be due to systematic errors, for example atmospheric distortions of the GPS signal which will be practically identical for two units next to each other. But for the purpose of this discussion, let us assume such systematic errors are small.
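"Doing the math" from point 3 could look something like the sketch below. It rests on assumptions that are not established in this thread: both units see the same underlying ripple, each adds its own independent noise of equal size, and the slow trend in speed has already been removed so that only the ripple is left. Under those assumptions the variance of the difference between the two tracks is twice the noise variance of one unit. The test data are synthetic (a sine wave plus Gaussian noise), not real GW-52 data.

```python
import math
import random
import statistics


def random_fraction(track_a, track_b):
    """Estimated share of ripple variance that is unit-specific (random) noise."""
    diff = [a - b for a, b in zip(track_a, track_b)]
    noise_var = statistics.pvariance(diff) / 2.0  # var(a - b) = 2 * noise variance
    total_var = (statistics.pvariance(track_a) + statistics.pvariance(track_b)) / 2.0
    return noise_var / total_var


# Synthetic check: shared ripple with variance 0.5, per-unit noise with variance 0.25.
rng = random.Random(42)
shared = [math.sin(2 * math.pi * i / 50) for i in range(20000)]
unit_a = [s + rng.gauss(0.0, 0.5) for s in shared]
unit_b = [s + rng.gauss(0.0, 0.5) for s in shared]

frac = random_fraction(unit_a, unit_b)
print(f"estimated random fraction: {frac:.2f}")
```

On the synthetic data the estimate comes back close to the known value of one third. As point 4 says, anything both units share, including shared systematic error, ends up in the "real" part, so this can only put a number on the unit-specific noise.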

However when I had one on my head and one on my arm there is a slight difference.

Here the ripple is mainly out of phase. Makes me think this is indeed noise, and arm to head distance has some relationship to half wave length.

This is a very interesting comparison. The difference between the ripples seems much larger than for the two units worn right next to each other on the head. I would also say that the ripples look different (and not just offset), but that's rather subjective.

The difference in the head-arm data is often in the range of 1/2 knot. For 5 Hz data, that is about 5 cm per sample; at 18 knots, the distance traveled in 0.2 seconds is 185 cm.
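The knots-to-centimetres conversions in this thread are easy to check. A small sketch (the function name is just for illustration; 1 knot is 1852 m per hour by definition):

```python
KNOT_IN_M_PER_S = 1852.0 / 3600.0  # 1 knot = 1 nautical mile (1852 m) per hour


def distance_cm(speed_knots, interval_s):
    """Distance covered in `interval_s` seconds at `speed_knots`, in centimetres."""
    return speed_knots * KNOT_IN_M_PER_S * interval_s * 100.0


for knots in (0.5, 1.0, 18.0):
    print(f"{knots:>4} knots over 0.2 s = {distance_cm(knots, 0.2):.1f} cm")
```

So at 5 Hz a half-knot difference is about 5 cm per sample, one knot is about 10 cm, and 18 knots is about 185 cm, matching the numbers used in the posts.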

It is entirely possible that the measured 0.5 knot difference between head and arm speed is real (or at least most of it). For example, if the head bobs a bit in the direction of travel, that may easily be a 5 cm movement. Actually, a lot of the ripple may be due to small back-and-forth movements of the head or shoulders. I once tried to put the GW-52 onto the mast about half way between boom and mast tip, and the ripples from the mast movement were quite extreme:

So, if small head or shoulder movements make a difference, should we base the 2 second speed on head or shoulder speed - or maybe average the 5 Hz data down to 1 Hz, which would make the speeds more similar?

Please do not misquote me. I said that Manfred was a driving force, not the driving force. Big difference. That assessment is based on the fact that Manfred developed one of the first 10 Hz GPS prototypes, among many other things. Many things you write about Manfred's roles in speedsurfing just underscore that. Nor did I say anything about his motives. I just happen to disagree with the one statement that Andrew said he made about 1Hz data on the GW-52, which is what my original post was about.

Again, you are putting words in my mouth. What I called "third-hand" was information about device decisions, and specifically about using the GW-52 in 1 Hz mode. If Andrew Daff cites a statement that Manfred Fuchs made, that is by definition second hand, not first hand. It would be first hand if Manfred would post here, but he does not. I am quite aware of Daffy's and Manfred's role in speedsurfing, and have a lot of respect for both of them. That does not mean that I have to agree with everything they say. I assume that Andrew cited a statement that Manfred made not very recently, as he remembered it. Memory can be a funny thing - I'll call that another degree of separation.

If we don't know, we shouldn't make any assumptions at all.... We should use scientific method to learn about our environment at this sub-second level.

In your statement, you are making an assumption - that sub-second measurements are better. Perhaps it is an assumption based on good arguments, and perhaps it has been supported by data, but it's still an assumption.

I can certainly agree with you that we should use scientific methods to evaluate GPS technology. Based on what I learned when getting my masters in biophysics and Ph.D. in experimental sciences, that involves making hypotheses, testing them, and publishing the results (along with enough information to reproduce the data). I find it amusing that you are all "scientific" about gathering more (i.e. higher frequency) data, but consider putting data somewhere where others can look at them as "too much work". And why would sending data by email to everyone who asks for them be less work? When the second person asks, it would be more!

Don't get me wrong, I am not advocating full scientific publication standards like peer-reviewed journals. But if someone has data which form the basis for a decision that a few thousand speed surfers should abide by, I think that such data should be put somewhere where everyone who wants can get them, together with a brief description of what was done and the conclusions drawn. That can be pretty informal - the primary purpose is to satisfy the curious minds, and perhaps to stimulate discussion. I have tried to do so with my evaluations of GPS units like the Flysight and the Canmore. I certainly appreciate that Raymond made the Gyro data available (although looking at them is a bit pointless if units are not available).

Sir, if anyone conflates, it is you. My post was entirely about the effects that the "5 Hz vs. 1 Hz on the GW-52" issue has for the vast majority of speed surfers who just want their results, and don't spend hours looking at their data on a sub-second level. I showed that the effect on accuracy is minimal on almost all categories, and small for 2 seconds, even if the GW-52 would use sampling. Since the GW-52 uses averaging that even you agree should be used, the effect is darn close to zero.

Rambling about memory cards being cheap is completely useless if I cannot put one into the GW-52! It's still the only GPS unit that's commercially available and fully recommended, so we are stuck with its very real limitations. And real they are - a friend just failed to get the "official" ice surfing speed record because of battery life limitations on the GW-52, even though he beat the old record by 2 knots. Losing data because you like to sail a lot and/or forget to pull the data off every day is also a very real limitation. Using the GW-52 at 1 Hz reduces this problem, albeit only somewhat since you still have to remember to charge it...

Please do not misquote me. I said that Manfred was a driving force, not the driving force. Big difference. That assessment is based on the fact that Manfred developed one of the first 10 Hz GPS prototypes, among many other things. Many things you write about Manfred's roles in speedsurfing just underscore that. Nor did I say anything about his motives. I just happen to disagree with the one statement that Andrew said he made about 1Hz data on the GW-52, which is what my original post was about.

There have been many high-Hz GPS's before Manfred's attempt - for example the VBox. Since you have singled out a specific person, it shows you are splitting hairs... it doesn't matter whether you use 'a' or 'the' -> it has the same meaning.

Again, you are putting words in my mouth. What I called "third-hand" was information about device decisions, and specifically about using the GW-52 in 1 Hz mode. If Andrew Daff cites a statement that Manfred Fuchs made, that is by definition second hand, not first hand. It would be first hand if Manfred would post here, but he does not. I am quite aware of Daffy's and Manfred's role in speedsurfing, and have a lot of respect for both of them. That does not mean that I have to agree with everything they say. I assume that Andrew cited a statement that Manfred made not very recently, as he remembered it. Memory can be a funny thing - I'll call that another degree of separation.

Andrew was quoting the manufacturer.

It does appear that you are singling out a specific person within this GPS community.

In your statement, you are making an assumption - that sub-second measurements are better. Perhaps it is an assumption based on good arguments, and perhaps it has been supported by data, but it's still an assumption.

I didn't say it was better or worse. I said we don't know, so let's measure it. There is no assuming anything.

I can certainly agree with you that we should use scientific methods to evaluate GPS technology. Based on what I learned when getting my masters in biophysics and Ph.D. in experimental sciences, that involves making hypotheses, testing them, and publishing the results (along with enough information to reproduce the data). I find it amusing that you are all "scientific" about gathering more (i.e. higher frequency) data, but consider putting data somewhere where others can look at them as "too much work". And why would sending data by email to everyone who asks for them be less work? When the second person asks, it would be more!

You are again splitting hairs.... putting information on a website - where it can be consumed by anyone/everyone - is significantly more work. You of all people should know this due to your effort of running a blog.

Don't get me wrong, I am not advocating full scientific publication standards like peer-reviewed journals. But if someone has data which form the basis for a decision that a few thousand speed surfers should abide by, I think that such data should be put somewhere where everyone who wants can get them, together with a brief description of what was done and the conclusions drawn. That can be pretty informal - the primary purpose is to satisfy the curious minds, and perhaps to stimulate discussion. I have tried to do so with my evaluations of GPS units like the Flysight and the Canmore. I certainly appreciate that Raymond made the Gyro data available (although looking at them is a bit pointless if units are not available).

By questioning what is already available [ and we should always question ], then the next bit of work is to formalise the limited information. So you *are* advocating publishing standards. I think it should be formalised and then published.

Sir, if anyone conflates, it is you. My post was entirely about the effects that the "5 Hz vs. 1 Hz on the GW-52" issue has for the vast majority of speed surfers who just want their results, and don't spend hours looking at their data on a sub-second level. I showed that the effect on accuracy is minimal on almost all categories, and small for 2 seconds, even if the GW-52 would use sampling. Since the GW-52 uses averaging that even you agree should be used, the effect is darn close to zero.

I thought your post was on "...one statement that Andrew said he made about 1Hz data on the GW-52, which is what my original post was about..."? .... but that is me now being pedantic... so let's not do that. You actually had many points - I suggest you re-read what you have written.

If a sailor only wants their end-results, it makes no difference whether it is 1Hz, 10Hz or 100Hz... the analysis is done for you... so that reasoning is immaterial.

However, that same sailor will also want to know their *best* speeds... even if that is 0.01 kns better due to 10Hz vs 1Hz.

Rambling about memory cards being cheap is completely useless if I cannot put one into the GW-52! It's still the only GPS unit that's commercially available and fully recommended, so we are stuck with its very real limitations. And real they are - a friend just failed to get the "official" ice surfing speed record because of battery life limitations on the GW-52, even though he beat the old record by 2 knots. Losing data because you like to sail a lot and/or forget to pull the data off every day is also a very real limitation. Using the GW-52 at 1 Hz reduces this problem, albeit only somewhat since you still have to remember to charge it...

I'm not going to have a big rant on why a record was not given.... I wasn't there, I don't know. However I don't see how a battery lifetime can reduce a run by two knots - did the device turn off during the record run?

The GW-52 isn't the only high-Hz GPS... if you don't like it, use one of the others [ but it will cost you a lot of money ].

Andrew was quoting the manufacturer.

It does appear that you are singling out a specific person within this GPS community.

Why don't you go back and read Andrew's post, before posting such bulli**? Or at least look at the top of my original post, where I quote sailquik verbatim?

Look back at page 4 of this discussion, and you will find Andrew's post that formed the basis of this discussion:

Our testing shows that it is essential to use 5hz recording with this unit for best accuracy.

1hz recording does not give as good results as with the GT-31. GW-52 5hz recording is better than the GT-31 1hz.

A few posts later, in response to a "joke" that Roo made, he posted:

Not any kind of joke and that is so far from home as to be in the next Galaxy. Just in really bad taste and totally irrelevant to the topic. It would still be with a thousand smily faces. What is your problem anyhow?

Quote from Manfred: "Running the GW 52 at 1Hz to match the GT31 also makes no sense because of the missing antialiasing filters - the units have to run with the highest frequency possible (GW 52, Thingsee: 10Hz) to avoid this, because simple decimation of the data without filtering renders the data useless..."

This is a quote from Manfred, not from "the manufacturer", as you claim. I was addressing the content of this quote. If you'd bothered to look at the "Rules" page on the GPS Team Challenge, you would have seen:

The GW-52 is also approved for use in the same way using 5hz logging*.

and a bit further down:

* The best mode for accuracy in the GW-52 is setting it to 5hz logging. The 1hz setting should be avoided as it can introduce aliasing issues as the data does not appear to be processed in the same way as in the GT-31. 'Smart logging' should not be used for posting. (based on recommendation by Manfred Fuchs as a result of his testing).

I have questioned the requirement to use the GW-52 in 5 Hz mode at all times. I have given data that showed that the difference between 1 Hz and 5 Hz mode is very likely to be very small. I have also expressed that I do not find it acceptable that the data on which such decisions are based are not made available. It seems we will be expected to upload the tracks from every single session we post on GPS-TC soon - but making the data that policy decisions are based on available is "too much work"? That, again, is bulli**. Putting the data on ka72.com or some other site takes less time than evaluating the data.

If I appear to be singling out Manfred Fuchs, then only because his statements appear to be the only reason that GPSTC requires that the GW-52 be used in 5 Hz mode. I have absolutely no problem with suggesting to use it in 5 Hz mode. But I have given examples where using it in 1 Hz mode makes more sense, and I think that 1 Hz data from the GW-52 should officially be allowed on the GPS TC.

If anyone has data that show that the results of the GW-52 in 1 Hz mode are significantly less accurate than GT-31 data or 5 Hz data, I hereby ask politely to please send them to me (based on mathew's previous statement that such data would be made available if one asks politely). If email is easier than uploading to a public server, send me a private message here (Andrew and Manfred have my email address).

If anyone has two GW-52s and wants to help out, I'd certainly love getting some data with one unit in 1 Hz mode, and the other at 5 Hz. Even better if you also have a GT-31 to compare to.

I'm not going to have a big rant on why a record was not given.... I wasn't there, I don't know. However I don't see how a battery lifetime can reduce a run by two knots - did the device turn off during the record run?

Here's how: Dean used to use GT-31s and had a bunch of them, but most of them died recently, so he was left with just one. He bought 2 GW-52s, and encountered a variety of problems with them (check Discgolf4's posts). When he used them for ice surfing sessions, he found that they would typically die in the middle of the day, way before the end of a session. So he used one GW-52 and one GT-31 on the record day. It turns out that the record rules on GP3S have not been updated since 2013, so they do not say anything about using 2 different GPS units.

He got 54 knots in a bunch of runs. The GW-52 died as expected, but not before it had recorded a number of 54 knot runs. But when he submitted the data from both units to the record committee, he was denied the official record - he was told that both data sets have to be from the same kind of device. Seems like a rather arbitrary and questionable decision, given that nothing in the official rules states such a requirement.

Here's his video:

I received my GW52 in time to use for Windfest. While not as simple to use as the GT31, it did the job. I've sorted the connectivity issues with the computer, downloaded and analysed files successfully. I've only had one problem. After 2 of the sessions I was unable to unlock and turn off the unit. After driving to my accommodation very slowly, I was able to download the file, then clear the file and turn off the unit via the utility. I could then unlock and set up for the next session via the touch screen. Has anyone else had this problem?

Any chance you could send me the file/s with it in? Daffy would be interested as well, I'll pass it on. Just PM me and I'll give you my email address. Edit: I've just checked some of my files and I do have some very high spikes, 85kts best so far. But that's only in trackpoint data, doppler data is fine. Beat it, just found trackpoints saying 372Kts, but doppler says 7kts. DO NOT use trackpoint data!
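For anyone wondering how a trackpoint speed of several hundred knots can sit next to a Doppler speed of 7 knots, here is a minimal sketch. It assumes that trackpoint speed is worked out from the distance between consecutive position fixes, which is the usual approach but is not confirmed here for the GW-52 or any particular analysis program. The coordinates, the 1 second spacing, the 6.5 knot Doppler values and the 5 knot threshold are all made up for illustration.

```python
import math

EARTH_RADIUS_M = 6371000.0       # mean Earth radius
MS_TO_KNOTS = 3600.0 / 1852.0    # 1 knot = 1852 m per hour


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def position_speeds_knots(fixes, dt_s):
    """Speed over each segment between consecutive (lat, lon) fixes."""
    return [
        haversine_m(*fixes[i], *fixes[i + 1]) / dt_s * MS_TO_KNOTS
        for i in range(len(fixes) - 1)
    ]


def flag_spikes(position_speeds, doppler_speeds, max_diff_knots=5.0):
    """Indices of segments where position speed and Doppler speed disagree."""
    return [
        i for i, (p, d) in enumerate(zip(position_speeds, doppler_speeds))
        if abs(p - d) > max_diff_knots
    ]


# Hypothetical track: heading north at about 6.5 knots, one fix per second.
lat0, lon0, step = -35.0, 150.5, 0.00003
fixes = [(lat0 + i * step, lon0) for i in range(6)]
fixes[3] = (fixes[3][0] + 0.002, lon0)   # a single bad position fix (roughly 220 m off)
doppler = [6.5] * 5                      # made-up Doppler speed for each segment

pos_speeds = position_speeds_knots(fixes, dt_s=1.0)
flagged = flag_spikes(pos_speeds, doppler)
print([round(s, 1) for s in pos_speeds], flagged)
```

One bad fix corrupts two segments, the one leading into it and the one leading out of it, while the Doppler values in this sketch are untouched because they do not depend on position at all.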

Posted 11/3/2016, 9:34 pm

Can't find the files. I guess I dumped them due to the spikes. I was running GT31 at the same time and used those files, dumped the duds. Will save any future spiky jobs.

Yes, mine had a bad case of internal condensation last week. I couldn't get it to unlock; like yours, it came good once connected to the computer. Once the condensation cleared it operated normally.

But for some reason, unlocking seems to be the hardest function to perform. It often takes me 5 or more tries to get it to happen, but it does seem to be improving with time. Either that or my fingers are learning what to do.

When I pulled mine apart last week, I could see the touch areas on the face. They are very close to the rim, so I think the closer to the rim you touch the better the response will be.

I think there is a correlation with condensation in the bag.

Ice Record Claim:

The published record rules state clearly that either two GT-31s or two identical 10 Hz Ublox devices must be used. There is no mention of the GW-52 in the currently published rules.

However, the new update of the rules, pending approval, includes the use of two GW-52s.

After the claim by Dean, we are looking into what might be able to be done about his claim and if there is some technically acceptable way we can accommodate it.

Anyone who thinks they might be in a position to claim a record is strongly advised to contact the WGPSSRC before doing so, especially if they want to use a device not mentioned in the rules, or have any other concerns that may need clarification.

5 Hz vs 1 Hz:

Enquiries to Locosys have confirmed that the device always processes data at 5Hz and writes an average of the 5 Hz data when in 1Hz recording mode. This was a bit of a surprise to us, but there it is.

This will be the reason why you will get very similar (but not identical) results from a GW-52 in 1 Hz mode and it also means that it would be perfectly acceptable to post data to the GPS-TC in 1Hz mode from this device. We will have the guidelines amended to reflect this.

The reason you will not get identical results, and the reason recording at a higher rate is preferable if memory capacity is not an issue, is that the analysis software will often be able to find a better segment of data points with a slightly higher speed average at 5 Hz. This will be the case if the speed average includes acceleration at the beginning and deceleration at the end. Granted, the difference will usually be minimal and probably insignificant in most cases, but by definition it will better reflect what the real situation was.
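To put some numbers on this, here is a minimal sketch of a best 2 second search on the same run, once on 5 Hz samples and once on 1 Hz values made by averaging each block of five. It assumes the 1 Hz mode is a plain block average of the 5 Hz data, as the Locosys reply suggests, and the speed profile is made up and deliberately exaggerated (real runs do not ramp by 1 knot every 0.2 seconds). The function names are hypothetical and not taken from any analysis software.

```python
def block_average(speeds, factor):
    """Average consecutive groups of `factor` samples (5 Hz -> 1 Hz when factor=5)."""
    usable = len(speeds) - len(speeds) % factor
    return [sum(speeds[i:i + factor]) / factor for i in range(0, usable, factor)]


def best_window_average(speeds, window):
    """Highest mean over any run of `window` consecutive samples."""
    if len(speeds) < window:
        raise ValueError("not enough samples for the requested window")
    running = sum(speeds[:window])
    best = running
    for i in range(window, len(speeds)):
        running += speeds[i] - speeds[i - window]   # slide the window by one sample
        best = max(best, running)
    return best / window


# Made-up 5 Hz speeds in knots: accelerate, hold 30 knots for 2 s, decelerate.
ramp_up = [20.0 + i for i in range(8)]      # 20 .. 27
plateau = [30.0] * 10                       # exactly 2 seconds at 5 Hz
ramp_down = [27.0 - i for i in range(7)]    # 27 .. 21
speeds_5hz = ramp_up + plateau + ramp_down  # 25 samples = 5 seconds

speeds_1hz = block_average(speeds_5hz, 5)

best_2s_5hz = best_window_average(speeds_5hz, 10)  # 2 s = 10 samples at 5 Hz
best_2s_1hz = best_window_average(speeds_1hz, 2)   # 2 s = 2 samples at 1 Hz
print(best_2s_5hz, round(best_2s_1hz, 2))
```

In this example the 5 Hz search can line its window up exactly with the 2 second plateau, while every 1 Hz window is forced to start on a whole-second boundary and so picks up part of a ramp. With pure block averaging the 5 Hz result can never be lower than the 1 Hz result, because every 1 Hz window is also one of the candidate 5 Hz windows.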

Accuracy at higher Hz:

There was much discussion many years ago between Mal Wright, Tom Chalko, Manfred Fuchs, Chris Lockwood and others in the advisory group about the so-called 'jitter' appearance in higher Hz speed graph displays. I was particularly concerned to understand what it was. The consensus was that it was very real variations in 3D Doppler readings. Since we had much more data in the 5Hz file, and were only concerned with the 2D speed in predominantly one direction, the small variations, presumably due to movements and vibrations, would cancel out in our case and produce very much more accurate speed data.
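The cancelling-out argument can be illustrated with a small simulation. This is only a sketch under strong assumptions: the jitter is modelled as zero-mean Gaussian noise that is independent from sample to sample, the true speed is held constant, and the 0.3 knot jitter size is made up. Real Doppler jitter from movement and vibration may well be correlated between samples, in which case the improvement would be smaller.

```python
import random
import statistics


def block_means(samples, size):
    """Mean of each consecutive group of `size` samples."""
    usable = len(samples) - len(samples) % size
    return [statistics.fmean(samples[i:i + size]) for i in range(0, usable, size)]


rng = random.Random(52)   # fixed seed so the run is repeatable
true_speed = 30.0         # knots, assumed constant for this sketch
jitter_sd = 0.3           # knots, made-up size of the per-sample jitter

# 1000 seconds of simulated 5 Hz speed readings.
samples_5hz = [true_speed + rng.gauss(0.0, jitter_sd) for _ in range(5000)]
one_second_means = block_means(samples_5hz, 5)

raw_sd = statistics.stdev(samples_5hz)
mean_sd = statistics.stdev(one_second_means)
ratio = mean_sd / raw_sd
print(f"per-sample sd: {raw_sd:.3f} kn, 1 s mean sd: {mean_sd:.3f} kn, ratio: {ratio:.2f}")
```

Under these assumptions the scatter of the 1 second means should come out at roughly 1/sqrt(5), or about 0.45, of the per-sample scatter, and averaging over 10 seconds (50 samples) would shrink it further.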

Regarding data and statistics:

The work was done by a number of different people, most of whom are mentioned above. Most of the communications were by email with links to data or attachments. Quite a bit was published on websites etc at the time but some or most has since been removed or is not currently visible. If you have specific questions, contact me and I may be able to point you in the right direction. You may also want to contact Manfred directly and he may be able to supply you with links to his research, some of which was posted on his website pages.

It would be better for the integrity of this thread if we take this offline or start a new thread.

Andrew was quoting the manufacturer.

It does appear that you are singling out a specific person within this GPS community.

Why don't you go back and read Andrew's post, before posting such bulli**? Or at least look at the top of my original post, where I quote sailquik verbatim?

Look back at page 4 of this discussion, and you will find Andrew's post that formed the basis of this discussion:

I did go back. And I just went back again. I was addressing the context of what was being discussed in your post, which appeared to be about 5Hz accuracy vs 1Hz (within the GW52). Since it wasn't known what the device implemented, Andrew asked the manufacturer directly.

If anyone has data that show that the results of the GW-52 in 1 Hz mode are significantly less accurate than GT-31 data or 5 Hz data, I hereby ask politely to please send them to me (based on mathew's previous statement that such data would be made available if one asks politely). If email is easier than uploading to a public server, send me a private message here (Andrew and Manfred have my email address).

If anyone has two GW-52s and wants to help out, I'd certainly love getting some data with one unit in 1 Hz mode, and the other at 5 Hz. Even better if you also have a GT-31 to compare to.

You haven't actually shown the GW52 @ 1Hz to be more accurate than GW52 @ 5Hz - you have shown that the difference is small and that there is some type of ripple which we don't understand. Just as important - and I might have missed it - you haven't explained why 1Hz is equally accurate [ vs. the alternative where a 5Hz track can find a better curve for the 2s/10s window ].

Note that requesting info in a public forum is probably the wrong way to contact someone directly - I would be surprised if Manfred, Mal, Tom, etc would read this forum anything more than just occasionally... or at all.

Can you take a picture of the back of the PCB?

Would be fun to see what they used.

Hmmm, well I did but it doesn't show much, the battery is glued to the back and I'm not game to try and prise it off.

The socket at the front is where the touchpad ribbon cable plugs in.

Unfortunately the micro usb socket I got has the output pins the same distance apart as the pins on the plug, whereas the original has the output pins spread over the width of the plug. I stuffed the replacement by trying too hard to spread the pins out, so I didn't short them out soldering the wires on.

I can't find any online that have the wider spaced pins, so I've ordered 5 more so I can experiment.

I think I need a finer tipped soldering iron and some dentist's eyepieces.

That would explain why Locosys told us there is no room for a larger battery. It is the one that was designed to fit in the watch form.

It would require a remoulding of the case, but there does look to be enough room once you've done that.

Good news is I managed to solder the new micro usb socket in.

Bad news, didn't fix the fault! Unplugged the connector to the PCB and replugged it, checked my work several times with a variety of magnifiers, to no avail.

Unit still works, but won't talk to computer, so may just wear it on my arm to keep track of the max speed and 5x10.

Then use the GT31 on my helmet for posting.

I am a newbie to the forum. I am curious how to install a GT-31 on the helmet. Is there a link where someone shares a photo? Is it useful to hear the real-time GPS speed through a headphone?

If you go back to page 5 of this thread, there's a pic of a helmet with the foam cut away for the GW52. I'm not a fan of big helmets as the increased area means more force on the neck in a crash.

I use a Gath, and there's a pic of it here.

www.seabreeze.com.au/forums/Windsurfing/Gps/GW52/?page=2.

I've just looped some straps through the vent holes and attached the Paqa Midi to them

I am a newbie to the forum. I am curious how to install a GT-31 on the helmet. Is there a link where someone shares a photo? Is it useful to hear the real-time GPS speed through a headphone?

Here is the picture of how Tom Chalko and I set up our helmets to mount 2 GT-31/GT-11's inside:

You can actually set the GT-31 up to have audio speed feedback as you sail and at the end of each run you can hear the speed reached.

It is quite audible when a GT-31 is mounted inside a helmet. Here is the description of it from the Mtbest.net website:

Speed Genie Sound: GT31 Speed Genie provides bio-feedback sound/light indicators: acceleration, max n-second average speed increase and also audio-announces the max avg speed result. Sounds are designed for GT31 mounted inside the helmet to provide sailors audio-feedback so that they can focus their eyes on sailing to improve safety and maximize speeds. LED bio-feedback is designed for arm-mounted GT31. Set (ALERT>SPEED GENIE>ON) to enable Speed Genie helmet bio-feedback. If you need sound, set (ALERT>BUZZER>ON). The bio-feedback is delayed about ~2 seconds, due to the limited bandwidth of the GT31 Doppler system. Please see README file for details.