Thanks Tony... back on track.

I've decided to send my broken GT-31 to Locosys. Hopefully get a few more years from them.

The user interface on the GW-52 seems to be a step back, IMO. Hopefully I won't have to mount it to my helmet in order for it to function properly.

Fully agree regarding the user interface but in my case, I've joined the GPS sailing culture too late and only have the new devices as options. Glad to hear that you're back on track mate.

The user interface on the screen is too fiddly for sure, but you should never have to use it except to start and stop the GPS, which is easy to do. Everything else is done in the utility and is very easy.

Discgolf4, you will get far better results if you mount the GW52 on your head or in your helmet. A direct sky view without interference makes quite a big difference.

All the go-pros and cameras are optional extras!

There is a large element of the 'New and Different Technology' issue here. Every time we have moved to a new GPS device there was a flood of complaints that it was "too hard", or "too confusing", or "not nearly as good as the last one to use". Really, it is mainly just different. It takes a little while to adjust your thinking and embrace the new way of doing things. Like programming a new VCR (or digital recorder these days I guess). Once you know how, it ceases to be a mystery and you forget all about it and just use it.

Remember this when the next generation of GPS device comes along. It will be many of the same comments.

Have you checked out the Quick Start Guide here:

www.locosystech.com/en/product/gps-handheld-data-logger-gw-52.html


"There is a large element of the 'New and Different Technology' issue here. Every time we have moved to a new GPS device there was a flood of complaints that it was "too hard", or "too confusing", or "not nearly as good as the last one to use". Really, it is just mainly different. It take a little while to adjust your thinking and embrace the new way of doing things."

That and the fact that many of us on the GPS trail are old farts and 'change'.....woo hoo, not likely if we can help it.

Me, I didn't like this GW52 thing at all to begin with but it's grown on me and I haven't used the old GT31 for a long time now.

Just had to engage the young part of the brain and get into miniaturisation, touch screens, no instructions and all that other good 21st century stuff.

I like the small dimensions, easily fits into a water proof cell phone bag.

I get spikes again just like in the Garmin days. Did 109kts one day.

Peak speeds are real racy because they are for 0.2sec and then the software knocks you back to reality.

Not a bad piece and it looks like LocoSys made it specifically for the GPS community.

I found a "water sports" helmet that contains the GW-52 very well.

It was fairly expensive, but seems very lightweight. The fit is remarkable for a "one size fits all" helmet.

Very good ventilation and an adjustable, ratcheting headband for a tight fit.

The foam is deep enough to handle the unit in a water-tight bag (or two).

Here is the website, if you're curious: www.forward-wip.com/en/1-pro-wip-helmet.html

Not sure how a mylar sail can block a signal that a plastic helmet won't... but I'm sure I'll find out.

Nice find Discgolf4!

Mylar sails don't block radio signal unless they are covered with a film of water (unlikely most of the time). The plastic on the helmet won't either.

At a recent snow sports trade show I inspected about 50 different models of Skiing and watersports helmets. The majority of them were well suited to adapting to hold at least one GT-31 or GW-52 in the lining foam. Many could be adapted to hold two, as is the case with my old ProTec snow helmet.

Hey Andrew,

Correct me if I am wrong, but isn't the unit in the helmet now totally blocked by the top of the helmet itself, negating your 'direct sky view' theory? Are you seriously saying 2-3mm of plastic isn't going to affect a signal but a clear sail with droplets of water on it is? How is it any different from wearing a unit in a plastic container (paqua for instance) on your arm that is constantly getting splashed and wet?

No Andrew, fortunately for us, radio signals are not blocked at all by plastics, in a similar way that light passes through glass. We have been using and advocating this setup since about 2007, originally tested and advocated by Tom Chalko and described here, before we even had the GT-31:

web.archive.org/web/20120217132229/http://mtbest.net/speed_sailing_helmet.html

But water is a very good blocker of radio signals. Since Monofilm sails do not hold water, they do not normally block radio signals either. Perhaps the older woven cloth sails might hold enough water to degrade signal but I doubt it. The plastic of waterproof bags does not hold water like a cloth does and has not been shown to degrade signal.

On the other hand, it has clearly been demonstrated many times, to the detriment of many unfortunate sailors, that soaking wet wetsuit material in combination with poor positioning that allows the body (90%+ water) to block signal can severely degrade GPS performance.

Likewise, because water is a very good blocker and reflector of radio signals, GPS units don't work at all under even a very shallow depth of water, and an antenna facing upside down picks up multipath reflections off the water that can severely degrade accuracy. This is why it is very important to do everything you can to prevent the GPS arm bag from rotating around and hanging under your arm.

Has anyone found an issue with session durations being shorter than expected? My last two days' sessions have shown a duration about 30 minutes shorter than I was actually on the water. Could just be wishful thinking. Cheers for any info.

Cool, thanks for explaining.

Have you ever seen those "radome" things that cover a radar dish... they don't block the signal significantly**, they do however stop the wind from blowing the dish over.

** it does block some signal, but it is usually considered an insignificant loss (normally measured in dB or some other techy metric)... unless you are trying to listen for E.T.


Where is your min speed set? If it's too high you could be missing data that way.

Just had a connection problem with my test unit. I'm sure no water has got into the pacqua, must just be the salt air.

Boombuster's fix of spraying some CRC into the socket and working the plug in and out a few times has restored mine.

From now on I'll try to remember to do this regularly, maybe once a month or so. It doesn't need much and I'd keep the spray away from the display.

Perhaps spraying onto the plug as boombuster suggests is the safest option.


I've set it at 3 knots, so I wouldn't expect to lose 25% of my session. The same day I was out with you at Liptons, my session results indicated about 90 mins, but I thought I was out for over 120 mins. It's no big deal. Just curious if I have a memory issue (on the GW52!). It happened again yesterday, but I probably took a few more breaks to make equipment adjustments (ok, breathers).

It could be a big deal if your best results are missing, it would be really good to know if there's actually a problem here.

Make sure you have a GPS fix before you put the unit on, sometimes it can take a long time to achieve a fix when the unit is moving.

This could lop a bit off your session.

Maybe note the time between achieving a fix and when you put it back in clock mode.

Ok... another mystery.

My new GW-52 units stay locked after a full recharge. I was extremely frustrated when one did it and quickly decided that it should be returned. I then tried to duplicate the glitch with my second unit and it too locked up after being fully charged. By "locked up", I mean that they could not be unlocked. 15 minutes of trying did nothing, but when left alone for an unknown period of time, they return to normal function and I'm able to unlock them. Any idea what is happening here? Also, I'm unable to download the GW52 Updater/Installer... does anyone have a direct link? What is the desired firmware? I was sent GT31_ALL_V1_4B0312B.S. Is this the latest as of now?

Short video of the Lock/Unlock issue:

Just had exactly the same.

2 days later and it was still locked.

Connected the unit to the utility and updated all the settings (to the same as before) and it all works now, although the time is out by an hour.

Fortunately I still have a couple of GT31s.

I won't be relying on this unit, too unreliable.

All the latest firmware is here.

www.locosystech.com/en/product/gps-handheld-data-logger-gw-52.html

I doubt the GT31 firmware is much good for the GW52

Quote from Manfred: "Running the GW 52 at 1Hz to match the GT31 also makes no sense because of the missing antialiasing filters - the units have to run with the highest frequency possible (GW 52, Thingsee: 10Hz) to avoid this, because simple decimation of the data without filtering renders the data useless..."

"renders the data useless" is, at best, an extreme overstatement. I would put it into the same category as "speed below 5 knots should not count towards distance". It's a personal opinion, which is based on some rational arguments, but it's not the one and only truth. Remember you are quoting a German engineer!

I'm pretty fed up with all the "our tests show this and that and our rules are based on the results", without anyone ever bothering to make these data available. Setting up a WordPress or Google site to post the results of your tests takes just a few minutes.

Manfred has been a driving force behind going to higher data rates (5 Hz and 10 Hz). From the (mostly third-hand) talk I heard about this, I understand that the main reason to use higher data rates for measuring straight line speed (everything except alpha) is the reduction of random error through higher sampling rates. Assuming that measurement error is largely random, measuring at 5 Hz could give about 2-fold higher accuracy than measuring at 1 Hz.

If the GW-52 units would have proper filters when running at 1 Hz, then the accuracy at 1 Hz could be very close to the accuracy at 5 Hz. Simple averages would do most of the trick, Kalman filters (which are typically used in GPS units) would be even better. But I guess the comment about the "missing antialiasing filters" indicates that the GW-52 does not use such filters; instead, it may simply discard 4 of 5 data points, and log the 5th. This is the easiest way to implement 1 Hz recording on 5 Hz units, so there is a good chance it's done this way.

If we assume that the 1 Hz recording in the GW-52 is indeed implemented in this most simplistic way, the effect of the sub-sampling is that the error would increase by roughly 2-fold (square root of 5). Why anyone would call data with a 2-fold higher error margin "useless" is beyond me. Similarly, it is hard to imagine how the GW-52 can be significantly more accurate than the GT-31 or the Canmore at 5 Hz, but noticeably worse at 1 Hz. The only expected effect of using 1 Hz readings instead of 5 Hz readings would be that some teams might swap places in the monthly 2 second ranking (and perhaps in the alphas). The effect of running GT-31s in "power save" mode sure is a lot larger, and I did not see any warnings against that for years.
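To put a number on the "square root of 5" argument, here is a minimal sketch that simulates it. It assumes a constant true speed and independent Gaussian error on every 5 Hz sample, which is only a rough model and not how any of these units is known to behave; `simulate`, `sigma` and the sample counts are made up for illustration.

```python
import math
import random
import statistics

def simulate(n_seconds=20000, rate=5, sigma=0.5, seed=1):
    """Return (std of decimated error, std of averaged error) for pure random noise."""
    rng = random.Random(seed)
    decimated, averaged = [], []
    for _ in range(n_seconds):
        # Assumed model: each 5 Hz sample carries independent Gaussian error.
        errors = [rng.gauss(0.0, sigma) for _ in range(rate)]
        decimated.append(errors[-1])         # keep 1 point in 5, discard the rest
        averaged.append(sum(errors) / rate)  # average the 5 points into one
    return statistics.pstdev(decimated), statistics.pstdev(averaged)

dec_sd, avg_sd = simulate()
ratio = dec_sd / avg_sd
print(f"decimated: {dec_sd:.3f}  averaged: {avg_sd:.3f}  "
      f"ratio: {ratio:.2f}  sqrt(5): {math.sqrt(5):.2f}")
```

Under this model the decimated 1 Hz error comes out a bit more than twice the averaged 1 Hz error. Real GPS errors are not fully independent from sample to sample, so the real-world gap is probably smaller.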

There are a few practical reasons to use 1 Hz recording, though. The 5 Hz data have a lot of high-frequency oscillations that don't really add to the analysis. In "action replay" mode in GPSAR Pro, you can't really follow the speeds at 5 Hz, because they vary too quickly; nor does viewing tracks only around the current point work as well, since the region shown is 5x shorter. But more important is the limited data capacity on the GW-52 of only 129 thousand data points. That's 7.3 hours at 5 Hz, but 35 hours at 1 Hz. When I record at 1 Hz, I can recharge the unit between sessions from any USB charger or an external battery. When I record at 5 Hz, I must hook the unit up to a computer running Windows between sessions, or I lose data, since most of my sessions are longer than 4 hours. Depending on where I am, that can be pretty darn inconvenient.

If you're wanting to recharge your GW52 from a cigarette lighter/USB adapter, Locosys just advised that it needs to be 5 V, 500 mA:

This is Kate Yang,

Sales of LOCOSYS Technology Inc.

First of all, thanks for your support with our product.

Regarding your inquiry, please use the standard USB charge with voltage of 500mA @5VDC to charge the GW-52.

Thank you very much!

The older GT31 was 5 V, 850 mA.

To look into the 1 Hz vs. 5 Hz accuracy issue, I went ahead and split a 5 Hz file into 5 separate 1 Hz files, each offset by 0.2 seconds. This gives a "worst case" scenario for 1 Hz data. The results with GPS AR Pro are:

| Category | 5 Hz | 1 Hz range |
|---|---|---|
| 2 seconds | 27.56 | 27.09 .. 27.66 (with 4 of 5 values 27.53-27.66) |
| 10 seconds | 26.60 | 26.51 .. 26.66 |
| 5x10 sec | 26.07 | 26.03 .. 26.07 |
| 1 hour | 19.56 | 19.55 .. 19.57 |
| n. mile | 22.04 | 22.01 .. 22.07 |
| Distance | 102.61 | 102.60 .. 102.65 |

GPSResults gives accuracy estimate of +- 0.332 for 2 seconds, +-0.226 for 10 seconds, and +- 0.053 for the nautical mile for the 5 Hz data. That means all the sampled 1 Hz data are well within the range given (even for the worst 2 second sample, the range for the lowest 1 Hz value goes up to 27.42, and down to 27.23 for 5 Hz). In other words, the pseudo 1 Hz data obtained by discarding 4 of 5 points are statistically identical to the 5 Hz data for all 5 subsets. I'd say no big surprise here.
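The range comparison above can be checked with a few lines of arithmetic. This is just a sketch: the speeds and ± figures are the ones quoted in this post, and applying the 5 Hz error estimate to the 1 Hz subsets as well is an assumption; `intervals_overlap` is a made-up helper name.

```python
def intervals_overlap(a, b, half_width):
    """True if a +/- half_width and b +/- half_width overlap."""
    return abs(a - b) <= 2 * half_width

# (5 Hz result, 1 Hz value furthest from it, +/- estimate from GPSResults for the 5 Hz data)
checks = {
    "2 seconds": (27.56, 27.09, 0.332),
    "10 seconds": (26.60, 26.51, 0.226),
    "n. mile": (22.04, 22.01, 0.053),
}

results = {}
for name, (five_hz, worst_one_hz, err) in checks.items():
    results[name] = intervals_overlap(five_hz, worst_one_hz, err)
    print(f"{name}: 1 Hz up to {worst_one_hz + err:.2f}, "
          f"5 Hz down to {five_hz - err:.2f}, overlap: {results[name]}")
```

For the 2 second row this gives the 27.42 and 27.23 mentioned above, and all three categories overlap.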

Practically speaking, the differences between the down sampled 1 Hz data and the original 5 Hz data are wicked small (we are talking about a few cm difference here).
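For anyone who wants to repeat the test, the splitting step is simple. A rough sketch, assuming the speeds have already been read from the file into a plain list in time order; `split_by_offset` and the sample values are hypothetical, not real session data.

```python
def split_by_offset(samples, factor=5):
    """Split a high-rate series into `factor` decimated series, one per starting offset."""
    return [samples[offset::factor] for offset in range(factor)]

# Made-up 5 Hz speed readings in knots (two seconds' worth).
speeds_5hz = [27.1, 27.4, 27.9, 27.6, 27.2, 27.3, 27.8, 28.0, 27.5, 27.0]

subsets = split_by_offset(speeds_5hz)
for offset, subset in enumerate(subsets):
    print(f"offset {offset * 0.2:.1f} s: {subset}")
```

Each of the five lists is what a unit would log if it simply kept one point in five, starting 0.0, 0.2, 0.4, 0.6 or 0.8 seconds into the file.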

Boardsfr, I've had my suspicions about the 1hz data for a while. For a start, when on 1hz the max display on the unit still seems to be the 5hz ripple max, so it looked like the unit was still sampling at 5hz. Both sailquick and I have asked locosys how the 1hz data is calculated.

The reply in both cases is that it's the 5hz data averaged out to 1hz.

I've also run the GW52 at 1hz alongside a GT31 and got extremely close results.

I think Manfred got led astray on this somehow. Was the original watch version different?

For alphas where the 5hz copes with the change in direction during gybes better, and for short times like the 2sec, I can see the advantage of 5hz, but I don't think there's much advantage for the other divisions.


Same for me.

I've run GT31 and GW52 at 1 Hz for several sessions lately and pretty much the same speeds.

Also, no spikes at 1 Hz, which I do get at 5Hz


Spikes? Are you referring to the 5hz ripple? The peaks can be almost a knot above the average. They're not what are normally referred to as "spikes".

The reason you don't see them at 1hz is they've been filtered out in the GT31 and averaged out in the GW52.

Locosys says in the case of the GW52 the 5hz and 1hz data is the same, it's just that 5 points have been averaged to produce one.
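A small sketch of what "5 points averaged to produce one" does to the ripple peaks. The numbers are invented, and this is only a guess at the kind of averaging Locosys describes, not their actual firmware logic; `block_average` is a made-up name.

```python
def block_average(samples, factor=5):
    """Average each consecutive group of `factor` samples (e.g. 5 Hz -> 1 Hz)."""
    return [sum(samples[i:i + factor]) / factor
            for i in range(0, len(samples) - factor + 1, factor)]

# Made-up 5 Hz speeds in knots with a ripple peaking at 28.0.
speeds_5hz = [27.0, 27.5, 28.0, 27.5, 27.0, 27.0, 27.5, 28.0, 27.5, 27.0]
speeds_1hz = block_average(speeds_5hz)

print("5 Hz max:", max(speeds_5hz))
print("1 Hz values:", speeds_1hz)
```

The 5 Hz trace peaks at 28.0, while both averaged 1 Hz points sit at about 27.4, so the ripple peaks simply disappear from the 1 Hz log.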

While out today my GW52 developed heavy condensation on the inside of the display, and wouldn't unlock. Had to drive home with it still recording, but plugging it into the computer unlocked it. A long charge has now cleared most of the condensation and the touch screen works again.

I'm wondering if I should pull it apart to dry it out, or just leave it in the sun?

But the way it's going I could well be up for a Gryo when they come on the market.

- Price : TBA, aiming on €180,-

- Availability : depends on check and approval by GP3S and others

- Output : raw UBX, NAV-PVT and NAV-SVINFO (as required by GP3S)(1,5,10 and 18hz)

- Waterproof : TBA, depends on approval

- Communication: Micro-SD card up to 32GB, I use Linux (SDXC could work, but for now 32GB is fine)

- Settings: Via a config file, you can create this on my website : gearloose.nl/gps.html

- Battery: 1800mAh, on 18hz it runs 24hr

- Screen: LCD, 3 times the size of the GT-31

Looks great. No buttons on the unit? Can I get one to test?

Except for records, I don't see any advantage in 18 Hz. Just makes the files 18x larger.


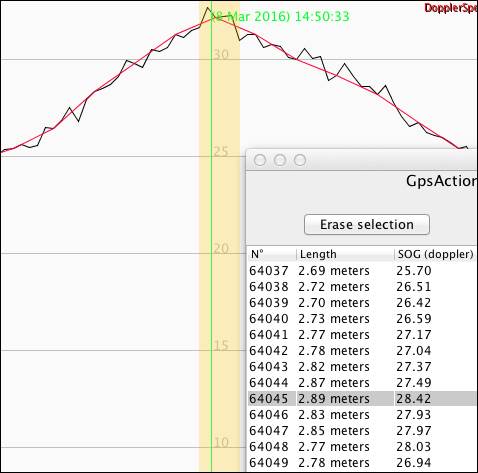

How much of the 5 Hz ripple is real, and how much noise, is an unanswered question, I think. Check out this section at 5 Hz and 1 Hz (down sampled):

One knot corresponds to 10 cm in 0.2 seconds (5 Hz). My arm may well move 5 cm forward and back in that time. If you look for the max at 5 Hz, you may get lucky and find 2 spots near the max that are both above the average, and get 1/2 or a whole knot more speed. You really want to claim that's faster?
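The 10 cm figure is easy to check, using one knot = one nautical mile (1852 m) per hour. `distance_cm` is just an illustrative helper.

```python
KNOT_IN_M_PER_S = 1852 / 3600  # one nautical mile (1852 m) per hour

def distance_cm(speed_knots, seconds):
    """Distance covered in centimetres at a given speed over a given time."""
    return speed_knots * KNOT_IN_M_PER_S * seconds * 100

d = distance_cm(1.0, 0.2)
print(f"{d:.1f} cm")  # about 10.3 cm per 5 Hz sample interval
```

So a 5 cm arm movement within one sample interval is worth roughly half a knot in the raw 5 Hz reading.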

Imagine the curve being flatter, because you stay closer to max speed for longer. Then, it is very likely that your top speed is peak-to-peak in the ripples. Measuring it this way goes against calling it "average" speed over 2 seconds. Using raw high frequency data only makes the problems for 2 second data worse. And we know that the 2 second category is the one that is most problematic, since spikes and other artifacts influence it the most.

If the 1 Hz data on the GW-52 are indeed averaged, as Locosys says (and I have no reason to doubt their statement), then using the GW-52 at 1 Hz will give more meaningful 2 second results than at 5 Hz. For all other categories, the differences will be extremely small - I bet smaller than the differences from wearing 2 units on different arms.